In this first post in a three-part series on detecting AI-generated images, we explore foundational forensic methods for determining whether an image was generated by AI, starting with metadata and file-signature analysis [1, 2]. These techniques, some long established and some still emerging, form a layered approach to image authentication and give lawyers and investigators practical tools for flagging synthetic imagery early in an investigation [2, 3]. We begin with EXIF and header inspection, then move to pixel-level analysis, sensor fingerprinting, and finally AI-driven detectors that learn the statistical “fingerprints” of generative models [4, 5]. No single technique is foolproof, but combining them yields stronger evidence, with each layer helping to confirm or refute AI origin [6, 7].

Metadata & File Signature Analysis

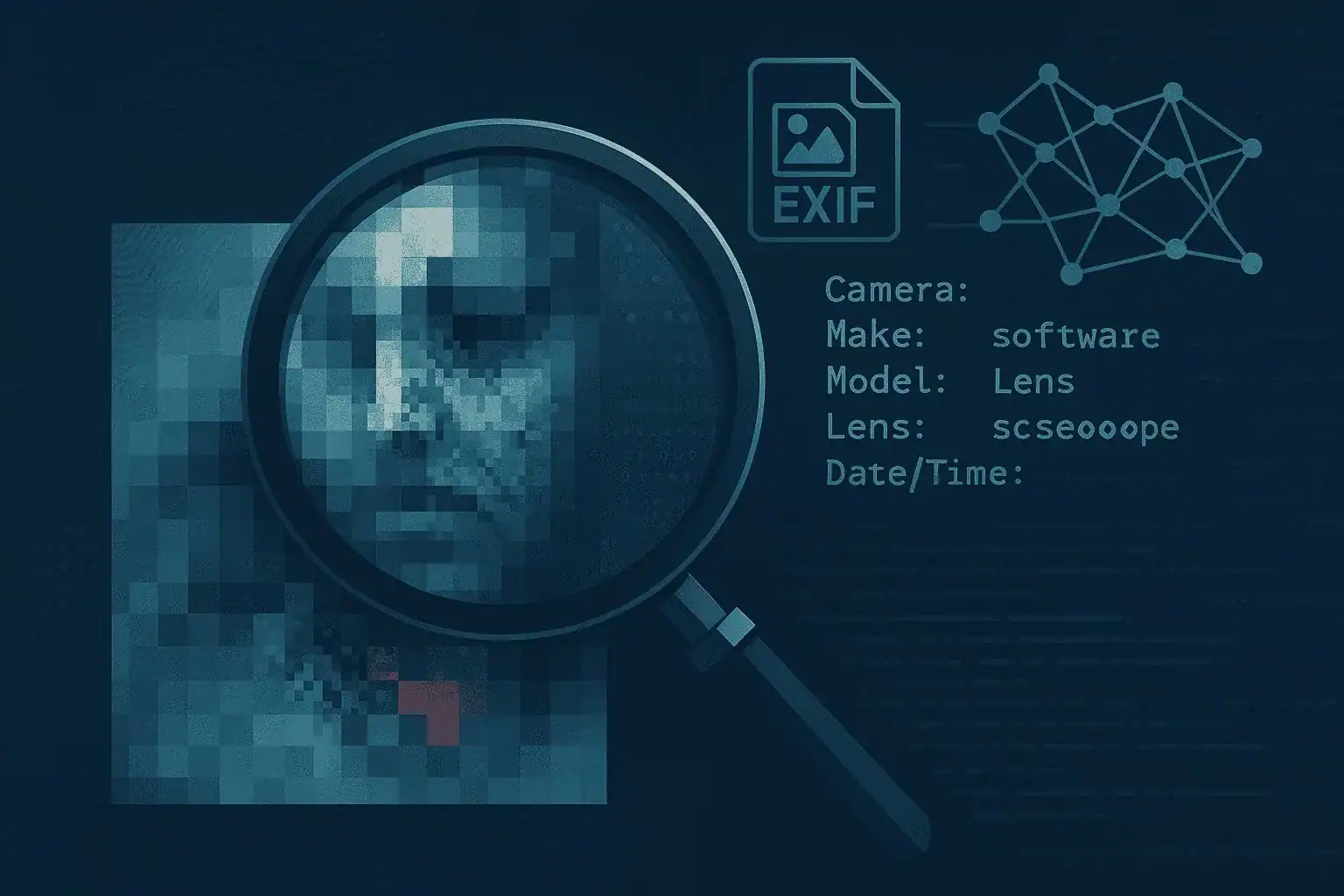

Detecting AI-generated images often starts with EXIF metadata and file signatures, which investigators examine for clues that an image is genuine or synthetic [1, 2]. Authentic camera images typically include details such as camera make and model, lens ID, timestamp, and sometimes GPS coordinates in the EXIF data, whereas many AI-generated images lack these fields or contain odd entries, such as a “Software” tag referencing a generative tool [1, 2]. Tools like ExifTool, FotoForensics, JPEGsnoop, and ReconEXIF let investigators extract and analyze metadata and detect header anomalies [2, 8]. However, because malicious actors can strip or falsify metadata, these observations alone are not conclusive; they serve as useful leads within a broader forensic workflow [9, 1]. The same checks are a sensible first step when identifying AI-generated photos in legal and investigative contexts.
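As a rough illustration of the header checks described above, the sketch below uses only the Python standard library to read a file's magic bytes and to see whether a JPEG carries an EXIF (APP1) segment at all. The function names (`sniff_format`, `jpeg_has_exif`, `triage`) and the short signature table are assumptions made for this example and are not taken from any of the tools named above. Reading individual tags such as “Software” would still require a full EXIF parser such as ExifTool.

```python
import struct

# A small, deliberately incomplete table of file signatures (magic bytes).
SIGNATURES = {
    b"\xff\xd8\xff": "jpeg",
    b"\x89PNG\r\n\x1a\n": "png",
    b"GIF87a": "gif",
    b"GIF89a": "gif",
}


def sniff_format(data: bytes) -> str:
    """Guess the container format from the leading bytes, ignoring the file name."""
    for magic, name in SIGNATURES.items():
        if data.startswith(magic):
            return name
    if data[:4] == b"RIFF" and data[8:12] == b"WEBP":
        return "webp"
    return "unknown"


def jpeg_has_exif(data: bytes) -> bool:
    """Walk the JPEG marker segments and report whether an APP1 'Exif' segment exists."""
    if not data.startswith(b"\xff\xd8"):
        return False
    pos = 2
    while pos + 4 <= len(data):
        if data[pos] != 0xFF:
            return False  # not at a marker: malformed or unsupported layout
        marker = data[pos + 1]
        if marker in (0xDA, 0xD9):  # start of scan or end of image: headers are over
            return False
        (length,) = struct.unpack(">H", data[pos + 2:pos + 4])
        if marker == 0xE1 and data[pos + 4:pos + 10] == b"Exif\x00\x00":
            return True
        pos += 2 + length  # marker (2 bytes) + segment (length includes its own 2 bytes)
    return False


def triage(data: bytes, claimed_ext: str) -> list:
    """Return human-readable flags; an empty list means nothing stood out."""
    flags = []
    fmt = sniff_format(data)
    ext = claimed_ext.lower().lstrip(".")
    ext = {"jpg": "jpeg"}.get(ext, ext)
    if fmt != ext:
        flags.append(f"extension '{claimed_ext}' does not match signature '{fmt}'")
    if fmt == "jpeg" and not jpeg_has_exif(data):
        flags.append("JPEG has no EXIF segment")
    return flags
```

A flag from `triage` is only a lead: missing EXIF is common in images that have passed through social media or messaging apps, so it should be weighed alongside the other layers discussed in this post.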

Visual Artifacts & Error-Level Analysis

Error-Level Analysis (ELA) highlights areas of an image that show different compression levels, revealing over-smoothed or composite regions that may indicate AI synthesis [2, 4]. Under ELA, synthetic patches often appear as distinct blocks or smear patterns compared with authentic regions, pointing investigators to sections that may have been manipulated [4, 3]. Beyond ELA, analysts scrutinize shadows, reflections, and fine details for inconsistencies, such as shadows that do not agree with the scene's perspective or reflections that do not match the reflected object, which even high-quality AI generators can still struggle to reproduce faithfully [3, 10]. Visual inspection for these anomalies complements automated checks and can be particularly effective when experts present annotated evidence in court [10, 2]. These visual forensic techniques are useful both for spotting AI-synthesized images and for identifying AI-generated photos.
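The recompression idea behind ELA can be sketched in a few lines. The example below assumes the Pillow library is installed; the function name `error_level_analysis` and the re-save quality of 90 are choices made for this illustration, not settings prescribed by the sources cited above.

```python
import io

from PIL import Image, ImageChops


def error_level_analysis(img, quality=90):
    """Re-save the image as JPEG and return (ela_image, max_diff).

    ela_image is the per-pixel absolute difference between the input and its
    re-saved copy, stretched so the largest difference maps to 255.
    """
    original = img.convert("RGB")

    # Recompress in memory at a known quality level.
    buf = io.BytesIO()
    original.save(buf, format="JPEG", quality=quality)
    buf.seek(0)
    resaved = Image.open(buf).convert("RGB")

    # Regions that react differently to recompression stand out in the difference.
    diff = ImageChops.difference(original, resaved)
    max_diff = max(high for _, high in diff.getextrema())

    # Stretch the usually faint differences so they are visible to the eye.
    scale = 255.0 / max_diff if max_diff else 1.0
    ela = diff.point(lambda v: min(255, int(v * scale)))
    return ela, max_diff
```

In practice an analyst would load a suspect file with `Image.open(...)`, pass it to the function, and inspect the returned image for blocks or smears that differ from their surroundings. The result is a visual aid for a human examiner and does not classify anything by itself.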

Sensor-Noise Fingerprinting (PRNU)

Photo-Response Non-Uniformity (PRNU) fingerprinting uses the unique noise pattern that each camera sensor leaves on its images to verify origin and detect forgeries [11, 5]. By comparing the PRNU extracted from a suspect image against a reference fingerprint derived from known captures by the same camera, investigators can determine with statistical rigor whether the image originated from the claimed device [5, 12]. AI-generated images lack a genuine sensor fingerprint, or carry inconsistent synthetic noise, so they do not match a camera's PRNU; a failed match is strong evidence that the image did not come from the claimed device and may be artificial [4, 11]. While PRNU analysis is a well-established forensic method, researchers are exploring advanced denoising and Bayesian techniques to improve noise extraction from single images and videos [12, 13]. This method is a cornerstone of AI-generated image detection.
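To make the comparison step concrete, here is a heavily simplified sketch using NumPy. It assumes grayscale images of identical size supplied as 2-D arrays, and it substitutes a 3x3 box blur for the wavelet or CNN denoisers used in the PRNU literature, so it illustrates the workflow without being forensic-grade. All function names are hypothetical.

```python
import numpy as np


def box_blur(img, radius=1):
    """Mean filter used here as a crude stand-in for a proper denoiser."""
    padded = np.pad(img, radius, mode="reflect")
    h, w = img.shape
    k = 2 * radius + 1
    acc = np.zeros((h, w), dtype=np.float64)
    for dy in range(k):
        for dx in range(k):
            acc += padded[dy:dy + h, dx:dx + w]
    return acc / (k * k)


def noise_residual(img):
    """Image minus its denoised version: what is left is mostly noise."""
    img = np.asarray(img, dtype=np.float64)
    return img - box_blur(img)


def estimate_fingerprint(images):
    """Estimate a camera fingerprint from several known captures.

    Uses the common form K = sum(W_i * I_i) / sum(I_i^2), where W_i is the
    noise residual of image I_i.
    """
    num = 0.0
    den = 0.0
    for img in images:
        img = np.asarray(img, dtype=np.float64)
        num = num + noise_residual(img) * img
        den = den + img ** 2
    return num / (den + 1e-9)


def prnu_score(img, fingerprint):
    """Normalized correlation between the image's residual and fingerprint * image."""
    img = np.asarray(img, dtype=np.float64)
    a = noise_residual(img)
    b = fingerprint * img
    a = a - a.mean()
    b = b - b.mean()
    return float((a * b).sum() / (np.linalg.norm(a) * np.linalg.norm(b) + 1e-12))
```

A score near zero suggests the image does not carry the reference camera's pattern, while a clearly positive score supports a match. Where to draw the decision threshold depends on image size and content and would need to be validated on known data before being relied on.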

AI-Based Classifiers

Researchers train machine-learning classifiers on large datasets of real and synthetic images, creating an emerging class of forensic tools designed to spot the subtle statistical fingerprints of generative models [6, 7]. These detectors analyze frequency artifacts, color-channel correlations, and patch-level inconsistencies that human experts might miss, and they achieve high accuracy in controlled settings [6, 14]. However, this approach is inherently part of an arms race: adversarial modifications, such as adding slight noise or blurring the image, can defeat many classifiers, so detectors need continuous retraining and validation [14, 15]. Consequently, investigators should treat AI-based detection tools as a complement to traditional forensic methods rather than as standalone evidence [2, 6]. AI-generated image detection requires ongoing adaptation to new generative techniques.
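Trained detectors learn their own features, but the frequency artifacts mentioned above can be illustrated with a hand-crafted one. The NumPy sketch below computes a radially averaged power spectrum and a simple high-frequency energy ratio; both function names and the choice of 32 bins are assumptions for this example, and the output is a feature that a classifier might consume, not a verdict.

```python
import numpy as np


def radial_power_spectrum(gray, n_bins=32):
    """Average the 2-D power spectrum over rings of equal spatial frequency."""
    gray = np.asarray(gray, dtype=np.float64)
    spectrum = np.abs(np.fft.fftshift(np.fft.fft2(gray - gray.mean()))) ** 2

    h, w = gray.shape
    y, x = np.indices((h, w))
    radius = np.hypot(y - h // 2, x - w // 2)
    r_max = min(h, w) / 2

    # Map each pixel to a ring index; corners beyond r_max land in an overflow bin.
    bins = np.minimum((radius / r_max * n_bins).astype(int), n_bins)
    sums = np.bincount(bins.ravel(), weights=spectrum.ravel(), minlength=n_bins + 1)
    counts = np.bincount(bins.ravel(), minlength=n_bins + 1)
    return sums[:n_bins] / np.maximum(counts[:n_bins], 1)


def high_freq_ratio(gray, n_bins=32):
    """Share of the radial profile that sits in the upper half of the frequency range."""
    profile = radial_power_spectrum(gray, n_bins)
    total = profile.sum()
    return float(profile[n_bins // 2:].sum() / total) if total > 0 else 0.0
```

A profile like this could be compared across sets of known-real and known-synthetic images, or passed to a classifier as one input among many. Any threshold on `high_freq_ratio` would have to be learned and validated on representative data, and resizing or recompression can change the spectrum considerably.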

For a broader overview of the deepfake problem from a forensics perspective, see our related blog post: Deepfake Defense (https://lucidtruthtechnologies.com/deepfake-defense/).

References

- [1] Fake or Real? Detecting AI Images from a Forensic Lens

- [2] How to identify fake images - Hawk Eye Forensic

- [3] Photo forensics from lighting shadows and reflections

- [4] AI-Based Fake Image Detection using Digital Forensic Imaging Techniques

- [5] Determining Image Origin and Integrity Using Sensor Noise

- [6] FakeScope: Large Multimodal Expert Model for Transparent AI-Generated Image Forensics

- [7] Awesome AI-generated Image Detection - GitHub

- [8] ReconEXIF - Forensic Metadata Extraction Tool - GitHub

- [9] International Journal of Multidisciplinary

- [10] How To Reveal AI-Generated Images By Checking Shadows And Reflections …

- [11] Digital Image Forensics Using Sensor Noise

- [12] A comparison study of CNN denoisers on PRNU extraction

- [13] GitHub - sim-pez/prnu: Extract camera fingerprint using different types …

- [14] A Practical Synthesis of Detecting AI-Generated Textual, Visual, and …

- [15] Discerning Reality from AI: How to Distinguish Genuine Photos from AI …